When founders get stuck, they tend to blame it on circumstances or people—sometimes including themselves. But debugging problems this way often leads to more stuckness. It's like engineers blaming a computer for crashing when their code has a giant memory leak.

Still, it’s a widespread perspective. My experience running Hipmunk included times of paralysis, panic, petulance, and paranoia. As I work with founders, I see the same patterns play out. So I started to research why this happens and how to overcome it.

When I shared some early findings in a class for founders at MIT, students were surprised to see that our immune system imagines threats the way our minds do. And somehow, the model of a “biological algorithm” helped them get unstuck.

I wondered: could an algorithmic perspective address other ways leaders get stuck? The answer turned out to be yes. (Spoiler alert: there’s an algorithm for it.)

Getting there, however, required me to rebuild my understanding of the way people think and work. I’ll walk you through the algorithm I used, and then show how you can repeat this process for whatever’s keeping you stuck.

Biological Algorithmic Complexity

It is obviously the working, the function, which is important in an individual, the structures being only instruments for the function's better performance. J.S. Huxley

I started my search looking for genetic foundations, since that’s what I learned about in high school[1]. But the more I researched, the less genes seemed to explain in every part of biology and psychology.

We now know that simply changing the voltage in certain parts of a frog embryo makes eyes grow in parts of the body they’re not supposed to. Likewise, our human immune system works in part by re-shuffling genes at random, not merely copying our inherited genome as-is[2], and the immune system in turn affects our mental functioning. So while genes can explain a lot, they aren’t enough to explain all of us.

Some researchers now look at information (i.e. data[3]) as another essential component of life. But when I share analogies with founders, it’s important for me to get the biological stories right, and neither genes nor information is the same kind of thing as paranoia. What an organism does with information determines whether it eats or is eaten. Likewise, what we do with information determines whether we’re learning, happy, or paranoid.

This points to a third building block in all living things: information-processing algorithms[4]. Below, I show that these algorithms are meaningfully distinct from their physical media (such as genes); algorithms can modify and even create new physical media. I also show that these algorithms are subject to natural selection; the “fittest” algorithms can outcompete the “fittest” genes.

This seemingly abstract point has significant practical consequences. If you want to know why you’re paranoid, my hypothesis is that it’s not (just) for the survival of your genes, but rather for the survival of the paranoia algorithm itself[5]. This shift in perspective offers novel answers to why we so often fall into bad habits—and how to make ourselves better.

Curious About Curium

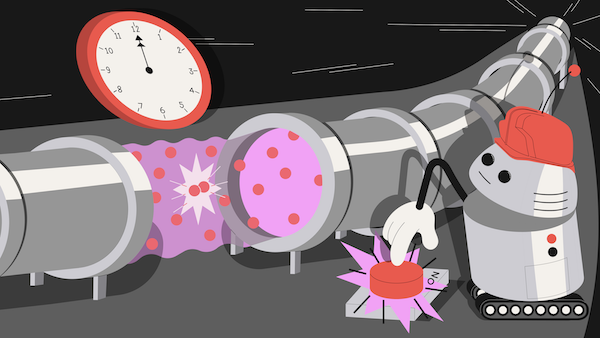

To simplify, let's start with non-living things. Suppose there’s a particle accelerator that turns one type of matter into another (e.g. curium into californium), and pushing a button starts the accelerator.

Now I write some code that makes a robot push the button when the clock strikes midnight, and you write some code that never causes the robot to push the button. We put each algorithm on a separate thumb drive, shuffle them up at random, and stick one into the robot.

We wait for midnight to arrive, and discover that the robot pushes the button.

I argue that curium turned into californium because the robot ran my algorithm. This implies algorithms don’t (just) arise from matter, but can change matter itself.
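The thought experiment can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the hour-based clock, and the string labels for the material are all hypothetical and exist just to make the two "thumb drives" concrete.

```python
import random
from typing import Callable

# All names here are hypothetical; they only illustrate the thought experiment.
Algorithm = Callable[[int], bool]  # hour of day -> should the robot press the button?


def press_at_midnight(hour: int) -> bool:
    """The first thumb drive: press when the clock strikes midnight."""
    return hour == 0


def never_press(hour: int) -> bool:
    """The second thumb drive: never press the button."""
    return False


def pick_drive(rng: random.Random) -> Algorithm:
    """Shuffle the two drives and put one of them into the robot."""
    return rng.choice([press_at_midnight, never_press])


def run_experiment(algorithm: Algorithm) -> str:
    """Return the material in the accelerator after one full day."""
    material = "curium"
    for hour in list(range(1, 24)) + [0]:  # 01:00 through 23:00, then midnight
        if algorithm(hour):
            material = "californium"  # pressing the button starts the accelerator
    return material


midnight_result = run_experiment(press_at_midnight)
idle_result = run_experiment(never_press)
```

The robot, the button, and the accelerator are identical in both runs. The only thing that differs is which function was loaded, and that difference alone decides what the matter ends up being.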

A Crisper Example

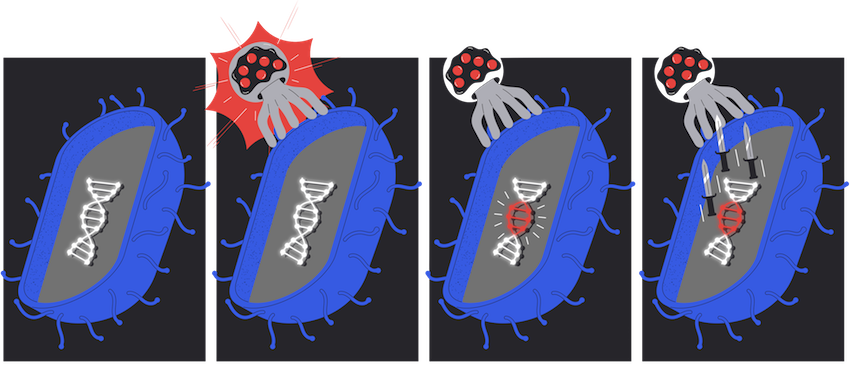

Next, let’s look at some living things: bacteria. CRISPR is part of some bacterial immune systems, allowing them to modify their own genome to directly incorporate some of the genetic material of viruses they encounter. Then, in the future, the bacteria can recognize and fight off these viruses more quickly[6].

Suppose we know that a bacterium will modify itself using CRISPR in the presence of a particular virus, and that this modification will result in proteins for fighting off future copies of that virus.

We introduce the virus into the bacterium, and then, in the moment before the bacterium “notices” the virus, we ask, “Does this bacterium currently have the DNA for fighting off future copies of this particular virus?”

(Illustrated sequence. Step 2, the moment of interest: a virus attacks. Step 3: the bacterium incorporates some of the virus's genome into its own. Step 4: the bacterium generates proteins to fight off the virus.)

The answer is no, because its DNA hasn’t changed yet[7].

Finally we ask, “Does the organism currently have the capability to fight off future copies of the virus?” And here the answer is yes.

By following instructions inherent in itself, the bacterium “knows how” to—and will—modify itself to fight off the virus.

Said differently, the organism has the algorithm for fighting off future copies of this virus before it has the proteins or genomic edits to do so. That implies that biological algorithms don’t (just) arise from DNA, but these very same biological algorithms can generate DNA as well.
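A toy model makes the ordering explicit. It is a rough sketch, not a description of real CRISPR biochemistry: the `Bacterium` class, the snippet length, and the example sequence are assumptions chosen only for illustration.

```python
from typing import List

SPACER_LENGTH = 8  # assumed snippet size, chosen only for illustration


class Bacterium:
    """Toy model: a genome plus a CRISPR-like routine that can edit it."""

    def __init__(self) -> None:
        self.spacers: List[str] = []  # viral snippets stored in the genome

    def has_dna_against(self, virus_genome: str) -> bool:
        """Does the genome currently contain a snippet matching this virus?"""
        return any(spacer in virus_genome for spacer in self.spacers)

    def encounter(self, virus_genome: str) -> None:
        """The algorithm: copy a snippet of an unrecognized virus into the genome."""
        if not self.has_dna_against(virus_genome):
            self.spacers.append(virus_genome[:SPACER_LENGTH])


virus = "ATGCCGTAAGCTTAGG"  # made-up sequence
cell = Bacterium()
before = cell.has_dna_against(virus)  # the genome has not changed yet
cell.encounter(virus)                 # the routine was present all along
after = cell.has_dna_against(virus)   # the routine has now produced new DNA
```

In this sketch the `encounter` method exists from the moment the cell is created, while the matching entry in `spacers` appears only after the encounter. That is the distinction the argument turns on: the capability precedes the DNA it generates.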

A Bird-Brained Idea

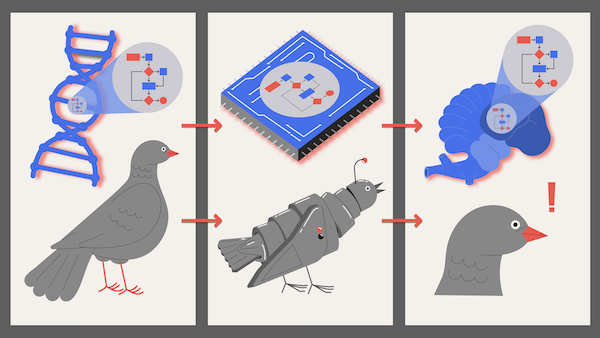

If algorithms are building blocks distinct from genes, we should expect algorithms to reproduce even when their genes don’t. And indeed, they can.

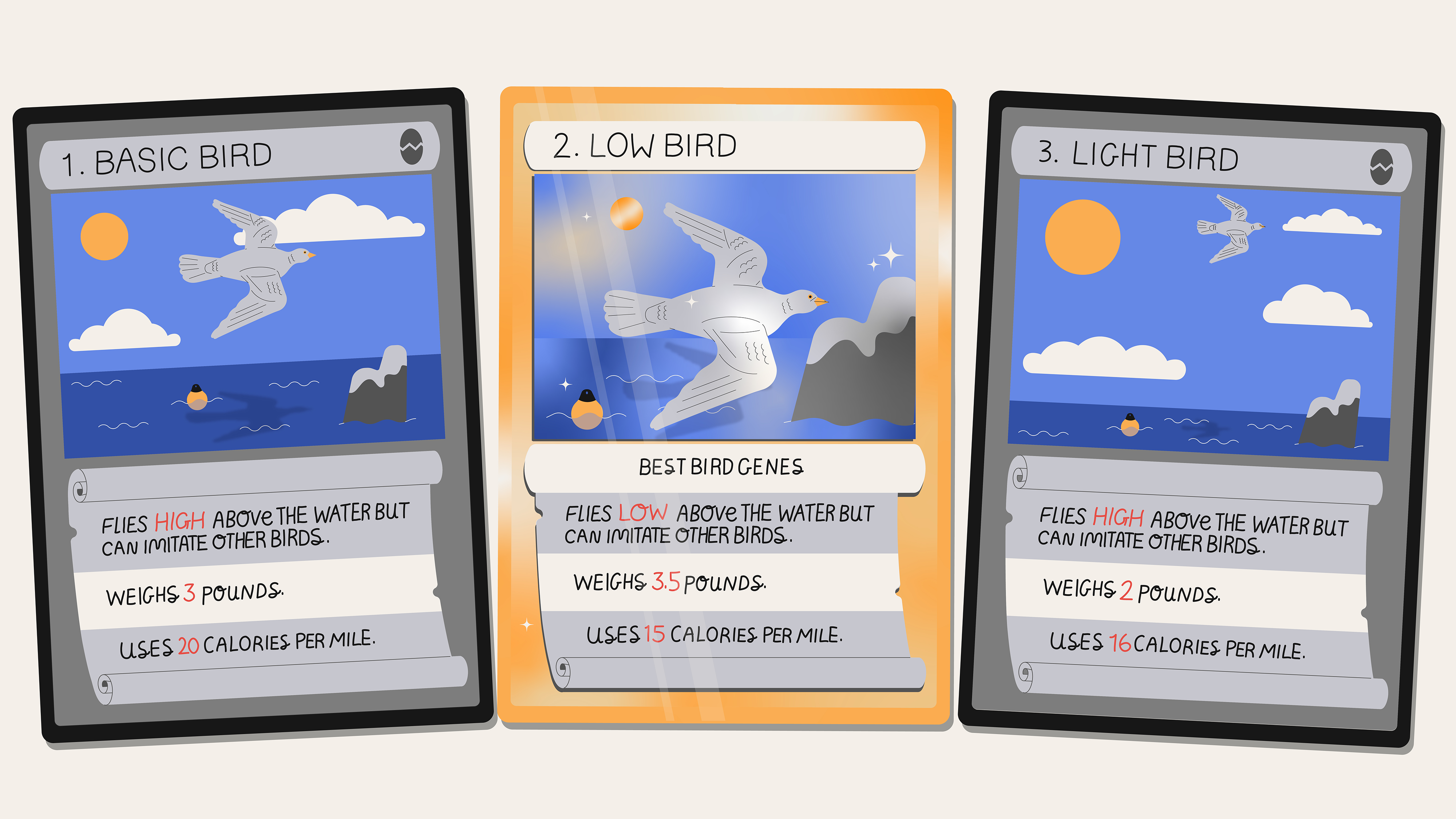

Imagine a species of bird which makes a long migration each year. These birds’ biggest risk is starving before they reach their destination, and with limited food en route, only the most energy-efficient birds survive.

Notably, these birds have the ability to override their genetically-programmed flying technique by imitating the flying of others. If imitation results in improved efficiency, the imitating bird will remember the new technique.

Now consider three variants of this species of bird which each fly in different ways, as outlined in the playing cards below.

Based on just this information, we would expect the long-term equilibrium of the species to be Low birds, which are the most efficient because flying close to the surface reduces aerodynamic drag[8].

But now suppose a Low and a Light bird see and imitate each other’s flying. One of these imitations will result in even better efficiency: a Light bird flying low will have the advantages of both less weight and less drag.

Natural selection will therefore take a different path than genes would have predicted[9]: newly-enlightened Light birds will outcompete both Normal and Low types. The algorithm for flying low will continue to proliferate—but not the genes for it[10].
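The imitation rule can be sketched as a small simulation. The cost multipliers below are invented for illustration (the essay gives no numbers), and the assumption that Normal and Light birds fly high by default is taken from the essay's footnotes rather than from measured data.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative energy-cost multipliers (assumed values): lower is better.
WEIGHT_COST = {"normal": 1.0, "light": 0.8}
ALTITUDE_COST = {"high": 1.0, "low": 0.8}


@dataclass
class Bird:
    name: str
    weight: str                              # fixed by genes
    genetic_altitude: str                    # flying technique encoded in genes
    learned_altitude: Optional[str] = None   # technique acquired by imitation

    @property
    def altitude(self) -> str:
        """A learned technique overrides the genetic one."""
        return self.learned_altitude or self.genetic_altitude

    def cost(self, altitude: Optional[str] = None) -> float:
        """Energy cost of migrating at the given (or current) altitude."""
        return WEIGHT_COST[self.weight] * ALTITUDE_COST[altitude or self.altitude]


def imitate(learner: Bird, model: Bird) -> None:
    """Try the model's technique; remember it only if it is more efficient."""
    if learner.cost(model.altitude) < learner.cost():
        learner.learned_altitude = model.altitude


normal = Bird("Normal", "normal", "high")
low = Bird("Low", "normal", "low")
light = Bird("Light", "light", "high")

imitate(low, light)  # flying high would cost Low more, so nothing is remembered
imitate(light, low)  # Light keeps its weight advantage and adds low flight
```

After the exchange, the Light bird is the cheapest flier of the three, yet its `genetic_altitude` is still `"high"`. The low-flying technique has spread to a new carrier while the genes that originally encoded it gain nothing.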

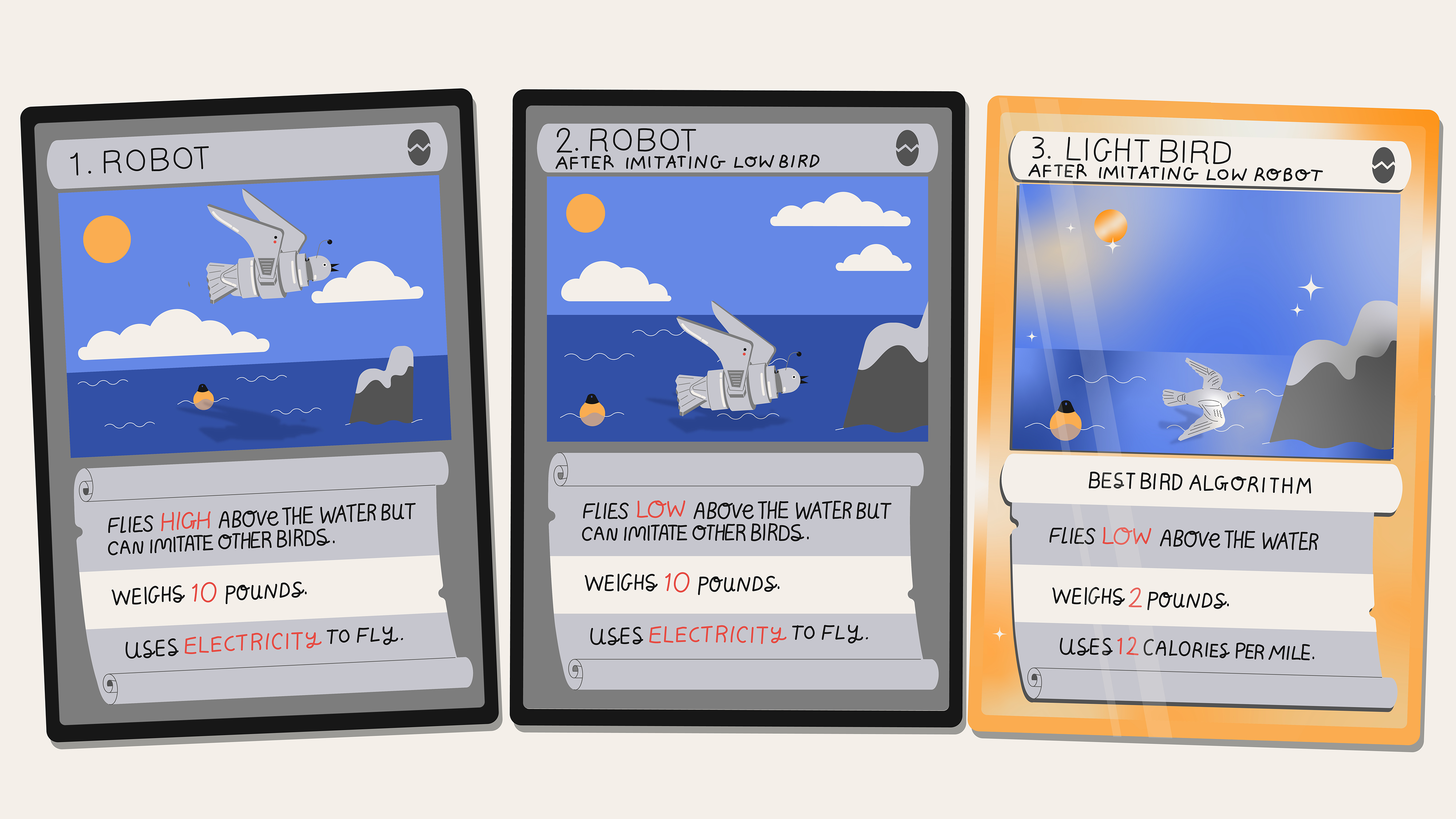

Suppose now that we introduce some “imitating robots” (which look enough like birds) into the migrating flock. Soon enough a robot will see and imitate a bird flying low, and soon enough a bird will see and imitate the robot flying low.

The “flying low” algorithm will now proliferate even more widely. Not only that, the “physical correlate” of this algorithm will appear in dramatically different media: first bird DNA, then robot silicon, and finally bird brains.

This illustrates two things. First, natural selection is acting directly on algorithms, as distinct from the genes in which the algorithm is expressed. And second, an algorithm can move between living and non-living things[11].

The Heart of the Matter

Mathematics my foot! Algorithms are mathematics too, and often more interesting and definitely more useful. Doron Zeilberger

To summarize: we’ve seen algorithms a) precede changes in matter, b) cause changes in matter, and c) jump between non-biological and biological matter. Together, these arguments cut to the heart of the matter: the trouble with building evolution on a foundation of only matter. Algorithms, too, matter.

We could, of course, try articulating some theory about how it wasn’t actually the (non-physical) algorithms that made these things happen, but rather the specific configuration of (physical) things like voltages in the robots’ memory chips[12]. We might even call those voltages information.

But this would be unsatisfying, because a set of voltages doesn’t mean anything without some context: a way of knowing whether a low voltage means 0 or 1, an interpreter for those bits in some machine language, and so on.

Said differently, the voltages in the robot’s memory aren’t themselves the flying algorithm, any more than the “Love Symbol” glyph is the artist Prince; they are all just representations. These representations only do something when translated properly. And translating them properly requires an algorithm[13].
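One concrete case of a representation depending on its interpreter is the signed versus unsigned reading of the same stored bytes. The sketch below uses Python's standard `struct` module; the parameter values and the 16-bit little-endian layout are assumptions made up for the example.

```python
import struct

# Hypothetical learned flying parameters; the values are made up.
params = [-3, 12, -1]

# The same bytes would sit in every robot's memory (little-endian 16-bit integers).
stored = struct.pack("<3h", *params)

# Two decoding conventions applied to identical bytes.
as_signed = list(struct.unpack("<3h", stored))    # the convention the encoder used
as_unsigned = list(struct.unpack("<3H", stored))  # a mismatched convention
```

Here `as_signed` recovers the original list, while `as_unsigned` turns the negative entries into large positive numbers (65533 and 65535). Nothing about the stored bytes differs between the two readings; only the decoding step does.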


Implications and Intimations

If we regard the chin as a “thing,” rather than as a product of interaction between two growth fields (alveolar and mandibular), then we are led to an interpretation of its origin […] exactly opposite to the one now generally favoured Stephen Jay Gould and Richard Lewontin

I can (and will) say a lot more about the implications of this framework for various fields of science. For now I’m going to return to where I started: human well-being.

My argument, in short, is that the most relevant unit of analysis for our minds is our information-processing algorithms—not genes, neurons, brain regions, or memes[14].

This is easiest to see in mental illness. Scientists know that rates of schizophrenia are much higher when someone has relatives with the same condition. But after decades of looking, they found that genes explained only a minority of the cases. Over time they discovered a much more subtle and sophisticated cascade, including physical factors like smoking and also non-physical factors like social exclusion.

The new consensus on schizophrenia is that it’s caused by non-standard information processing—that is, by mis-calibrated algorithms. The same is true for personality disorders, which are also inherited at higher rates than genes alone would predict[15].

Schizophrenia and personality disorders are extreme examples, but many other human “ways of reacting” are varied, inherited, and reproduced[16]. They also survive at different rates in different contexts[17]. For example, in dangerous environments, paranoia thrives, but in safe environments, the side effects of paranoia are more likely to kill than the objects of fear.

Understanding the Human Algorithms

What unifies much of mental illness and more mundane forms of stuckness is the mechanism of action: we catch a particular “stuck algorithm” in some way, and it starts reproducing like an invisible parasite—in us and sometimes our colleagues—for its own benefit. Dreadfully, because the algorithms have been finely honed by evolution, they even exert subtle influence on their environment to improve their odds of survival[18], often at our expense.

The good news is that while genes take generations to change, algorithms can change in a single lifetime. We can improve our experiences by modifying either our inputs or the algorithms themselves[19]. It just requires some deliberate work to find and replace them.

For most leaders (including me) the tendency when things aren’t going well is to look for something that will solve the problem: a new hire, fundraise, product launch, or pricing model. This approach, however, means reinforcing the existing algorithm’s point of view.

If we want to be better leaders, we have to examine these algorithms—including the algorithm for identifying which algorithms are working and which ones aren’t.

For example, when you’re petulant, don’t start by looking for which employee is responsible for a project delay. Instead, start by asking if your algorithm should be looking for the cause in your goals, people, processes, or you-specific factors like how well you slept the night before.

Likewise, when you're paranoid, don't start by asking if a new competitor is a major threat. Instead, ask if your algorithm for assessing risk and reward is calibrated correctly and focused on the most important threats—and re-calibrate it if necessary.

When a large customer cancels their order, don’t start by asking what you’re doing wrong. Instead, ask whether your product and sales algorithms have been targeting the kinds of customers that will make you most successful[20].

At the most general level, you can approach any leadership problem by asking what algorithms were running that led to it a) happening and b) being perceived as a problem, and then c) figuring out what algorithms need to run to change one or both. This is the algorithm for continuous improvement and stress reduction.

It works regardless of the causes, whether they be financial, emotional, interpersonal, genetic, or physical.

It works because algorithms are the matter with us.

Thanks to my pre-readers for their helpful comments: Andrew Wansley, Brian Christian, Daniel Gackle, James Somers, Jess Mah, Kimberly Cisneros, Nancy Hua, Neville Crawley, Nikhil Srivastava, Ray Goldstein, Sanjay Sarma, Uri Lopatin, and Zak Stone. And thanks to Yuval Haker for the illustrations.

Footnotes

[1] When I took introductory biology in high school, I was told that genes were the way that traits were encoded and passed on to the next generation. Richard Dawkins, one of the most enthusiastic proponents of this theory, argued:

If you ask what is this adaptation good for, why does the animal do this […] the answer is always, for the good of the genes that made it.

Now, in the field of systems biology, researchers describe phenomena like biological circuits, which provide an abstraction for biological engineering, such as these remarkable bacteria:

[Christopher] Voigt has designed bacteria that can respond to light and capture photographic images, and others that can detect low oxygen levels and high cell density—both conditions often found in tumors.

↩︎

[2] Another example of non-genetic inheritance: When certain worm embryos are exposed to a smell after their genome is set but before birth, they are more attracted to that smell after birth, and so are their children. ↩︎

[3] More specifically, it’s “information” as the term was used by Claude Shannon. ↩︎

[4] While modern biologists love to talk about cells and organisms as though they are running algorithms, most think the algorithms are a useful model rather than the main object of observation. For example, a textbook for the field of evolutionary systems biology says:

Evolutionary systems biology can generate new insights into the adaptive landscape by combining molecular systems biology models and evolutionary simulations. This insight can enable the development of more detailed mechanistic evolutionary hypotheses. [emphasis mine]

Essentially, the author Orkun Soyer is saying that biological circuits and algorithms (which, in his telling, emerge as secondary effects) help us make better predictions about biological molecules (which, in his telling, are the fundamental building blocks). This is true but limited.

Richard Dawkins, author of The Selfish Gene, recognized the possibility for evolution to act on non-genetic items as well within a gene-centered system, coining the term “meme” to describe the kinds of human ideas and practices that evolve. This, too, is true but limited.

This essay puts forward a broader perspective. I argue that natural selection can, and does, a) operate on algorithms themselves, b) even in the absence of or in opposition to genes and memes, and c) that the relevant domain of algorithms is much wider than just human memes. ↩︎

[5] The algorithmic approach is thus different from both the gene-centric modern synthesis, and the organism-centric flavor of the extended evolutionary synthesis. Had Huxley had the concept of algorithms when he wrote the opening quote of this section, he might have arrived at the same conclusions as this essay, rather than getting bogged down in hierarchies of structure. ↩︎

[6] This technique is so useful that it is now routinely used by genetic engineers to modify DNA. ↩︎

[7] We could say the organism has the genotype for “building machinery for modifying its genotype,” i.e. that it has the genes for CRISPR, but this is not the same. To argue these are equivalent would be like arguing that a mother has the genotype of “a Y chromosome” simply because, in conjunction with a man, she can conceive a male child. What she actually has, right now, are two X chromosomes. ↩︎

[8] For the sake of simplicity, I am ignoring the specifics of how these techniques are stored genetically and which ones are dominant or recessive. Under sufficiently strong environmental pressure, as in this example, the long-term equilibrium should be identical. ↩︎

[9] It might be tempting to say, “This is still a kind of genetic evolution, because the birds have genes for how to imitate other birds.” But this is not persuasive for two reasons. First, the path of evolution here also depends on the Light birds having seen the Low birds, a non-genetic phenomenon. Second, all birds in question can imitate; if that phenomenon is indeed genetic (something that cannot be assumed without becoming a circular argument), the “imitation genes” are preserved regardless.

What aren’t preserved are the genes for flying low. Imagine the point in the future at which all that’s left are Light birds that have learned to fly low. Suppose a mass-extinction event kills all living birds, but some of their eggs survive and ultimately hatch. These newborn Light birds will have no low-flying exemplars to imitate, so they will fly high, as their genes dictate. ↩︎

[10] This does not mean genotype and phenotype are completely irrelevant. On the autopsy table, we can make inferences about how a bird might have flown by looking at its DNA and its physical characteristics. Still, there is a limit to what we can know postmortem. The most comprehensive perspective would have come by watching the organism’s behavior when it was alive, such as seeing how high the bird flew.

Still, even that approach is limited. Over time we learn of more and more ways that organisms behave that aren’t directly perceivable through our human senses, such as birds that use ultraviolet light to assess which offspring need the most food and giraffes that hum to each other so low we can’t hear them.

The idea of non-genetic factors influencing evolution is no longer heresy in biology (for many examples, see Evolution in Four Dimensions). This is good, but the tendency to focus on visible physical “hardware” (signaling molecules, DNA methylation, and genes) remains strong (and limiting) as compared to a view of algorithms as first-order building blocks. ↩︎

[11] This also offers the start of a new way of thinking of the transition from “inert” matter to the first life. ↩︎

[12] Or some specific configuration of nucleotide sequences in the DNA of the birds, or some specific neural connections in the birds’ brains. ↩︎

[13] Moreover, natural selection operates on these conventions, punishing entities that can't “correctly understand” their own encodings! Imagine, for example, that all robots encode their learned flying parameters as a list of signed integers. Now imagine that some robots correctly decode the list as signed integers, while others incorrectly decode the list as unsigned integers. The flying algorithm would appear as an identical set of voltages in both kinds of robots’ memories, but the former set would fly correctly while the latter would immediately plummet into the sea. The unfit algorithm for “interpreting signed integers as unsigned” would quickly disappear, just as chronic mis-reading of DNA leads to disease and death. ↩︎

[14] There are several key distinctions between algorithms and memes (in the sense Dawkins used the term). First, algorithms are not necessarily stored in brains, whereas memes are. Second, algorithms are not unique to living things (or those we would typically consider living, like flying robots). Third, algorithms are not late-developing units of evolution that “emerged” from a genetic foundation, but rather (I argue) have been with life since the beginning, prior even to DNA. Lastly, whereas memes are seen as units (or inputs and outputs) of thought, algorithms include the processes that make thought and perception possible, such as sight, proprioception, sensing time, and having a concept of self. ↩︎

[15] For a comprehensive explanation of information-processing miscalibrations and inter-generational transmission of personality disorders, see Attachment Disturbances in Adults. ↩︎

[16] We can even model the spread of paranoia across a population. ↩︎

[17] These four conditions (variability, inheritance, reproduction, and fitness) are viewed as the requirements for natural selection, and are traditionally applied to genes. Here we see that the requirements are also met by algorithms, even when the algorithms in question are not (solely) a function of genes. ↩︎

[18] The idea that an organism can modify its environment to benefit its own survival is known in biology as niche construction. I am suggesting that algorithms themselves can be understood as engaging in niche construction. ↩︎

[19] Notice what happens after you read that sentence. However you feel, it’s because of how your algorithms are interpreting it. Don’t believe me? Look carefully: that paragraph is just pixels on a screen. ↩︎

[20] If the answer is, “yes, we've been going after the right kinds of customers,” then this cancellation may indeed be evidence you’ve been going about it wrong. But I just as often work with startups that get anchored to the idea that whichever companies are in their sales pipeline are the right customers to go after, even when they would be better off going for a different set of customers altogether (bigger, smaller, in a different industry, etc.). In this latter scenario, a cancellation or series of cancellations should be a welcome wake-up call to change the algorithm for filling the pipeline. ↩︎